Where Marketing Automation Actually Creates Revenue

Marketing is where GenAI gets both overhyped and underused. Overhyped because companies adore fast copy generation and confuse speed with growth. Underused because the real money isn't in having the model write ten more subject lines. It's in connecting customer signals, campaign logic, creative variation, merchandising decisions, and sales follow-up so the right offer reaches the right buyer at the right time.

P&G's turnaround is the clean example. Its early pilots reportedly saw about 80% abandonment because the data lived in silos and nobody could connect model output to business performance. The later fix was much sharper: integrate LLM-driven decisioning with supply chain and customer data, then hold teams to revenue metrics. That shift reportedly helped generate about $50 million in marketing personalization revenue.

"Use AI to connect web behavior, email engagement, call notes, and CRM changes so follow-up happens when buying intent is warm."
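One way to make "warm" concrete is a recency-weighted intent score: each signal carries a weight, and that weight fades as the signal ages. The sketch below is illustrative only. The signal names, weights, half-life, and threshold are all assumptions made up for the example, not values from any vendor or from the P&G case; a real team would fit them against its own closed-won data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical signal weights (assumed for illustration, not from any real CRM).
SIGNAL_WEIGHTS = {
    "web_pricing_view": 3.0,
    "email_click": 1.0,
    "call_note_positive": 4.0,
    "crm_stage_advance": 5.0,
}
HALF_LIFE_DAYS = 7.0  # assumed: a signal loses half its weight every week
WARM_THRESHOLD = 5.0  # assumed cut-off for "warm" intent


@dataclass
class Signal:
    kind: str
    occurred_at: datetime


def intent_score(signals, now):
    """Sum signal weights, each decayed by its age with a fixed half-life."""
    score = 0.0
    for s in signals:
        age_days = (now - s.occurred_at).total_seconds() / 86400
        weight = SIGNAL_WEIGHTS.get(s.kind, 0.0)  # unknown signal kinds count as zero
        score += weight * 0.5 ** (age_days / HALF_LIFE_DAYS)
    return score


def is_warm(signals, now):
    """True when the decayed score reaches the assumed threshold."""
    return intent_score(signals, now) >= WARM_THRESHOLD


if __name__ == "__main__":
    now = datetime(2026, 1, 15)
    account = [
        Signal("crm_stage_advance", now),
        Signal("email_click", now - timedelta(days=7)),
    ]
    print(intent_score(account, now), is_warm(account, now))
```

The decay is the point: a pricing-page visit from last month should not trigger the same follow-up as one from this morning.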

For teams building demand engines, the sweet spot is orchestration. Use blog automation to speed research, outlines, refreshes, and internal linking—but only when it plugs into a real content strategy built around search intent, product proof, and conversion paths. Use AI in social media marketing to test hooks, summarize audience feedback, and triage community questions—but don't let it turn every post into the same bland slurry.

10 Hot AI Plays Worth Scaling Right Now

If you want GenAI to earn its keep, stop hunting for one giant moonshot. Build a portfolio of revenue-linked use cases across operations, sales, service, and growth. The hottest bets in 2026 aren't random toys; they're workflows where better decisions happen faster, with cleaner context and lower labor drag.

The Short List

- AI sales copilots inside the CRM that surface next-best actions, draft account briefs, and flag stalled deals before the quarter slips away.

- Agentic customer service flows that resolve simple issues automatically, then tee up cross-sell or renewal opportunities for a human rep.

- Dynamic pricing and promotion engines that respond to demand, competitor behavior, and inventory constraints in near real time.

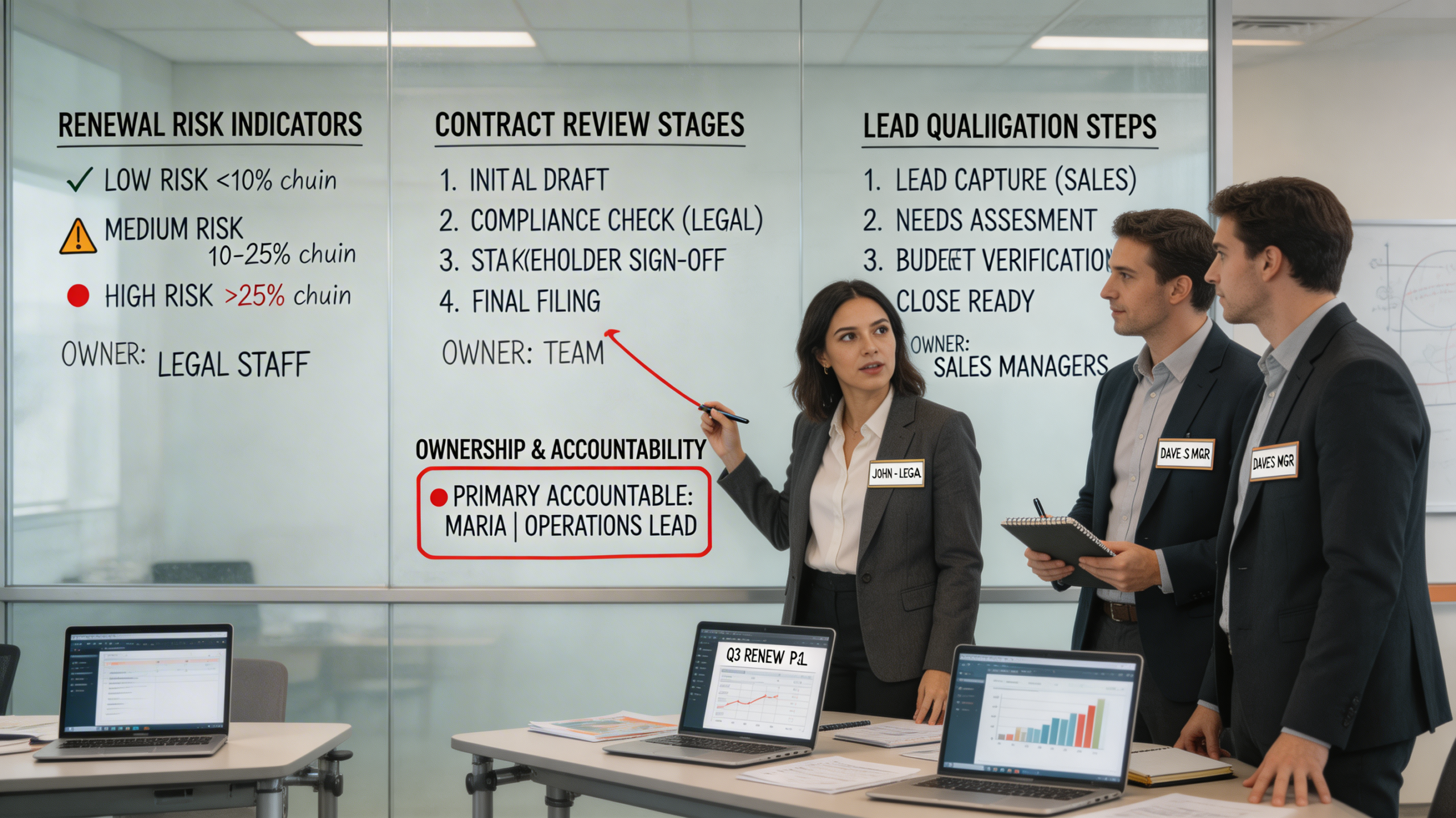

- Contract review and revenue leakage detection for sales, procurement, and legal teams drowning in clauses, exceptions, and missed renewals.

- Demand forecasting linked to inventory allocation so product availability supports revenue instead of sabotaging it.

- Procurement and supplier negotiation agents that identify cost anomalies, shorten cycle times, and protect gross margin.

- Creative testing at scale for paid media, landing pages, and lifecycle campaigns, with guardrails against brand drift and compliance issues.

- Voice-of-customer mining across calls, chats, reviews, and support tickets to expose churn drivers and hidden upsell signals.

- Knowledge copilots for field teams, account managers, and service staff who need instant answers pulled from manuals, policies, and customer history.

- RAG and synthetic-data programs for high-value domains where privacy, sparse data, or regulated content would otherwise block deployment.

Notice what these have in common. They sit inside real workflows, they rely on proprietary context, and they connect to cash. Some grow top-line revenue directly. Some protect margin. Some do both. That's why the old vanity metrics have to go. Measure marginal revenue lift, CAC payback, conversion to qualified opportunity, average handling time with quality controls, renewal probability, and gross margin improvement.
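Two of those metrics are simple enough to pin down in a few lines. The sketch below assumes a holdout design for marginal revenue lift (a control group that did not get the AI-driven treatment) and a common gross-margin-based definition of CAC payback. The function names and the sample numbers are hypothetical, and other definitions of both metrics are in use.

```python
def marginal_revenue_lift(treated_revenue, treated_n, control_revenue, control_n):
    """Incremental revenue versus a holdout group, scaled to the treated group size."""
    if treated_n <= 0 or control_n <= 0:
        raise ValueError("group sizes must be positive")
    per_treated = treated_revenue / treated_n
    per_control = control_revenue / control_n
    return (per_treated - per_control) * treated_n


def cac_payback_months(cac, monthly_revenue_per_customer, gross_margin):
    """Months of gross-margin contribution needed to recover acquisition cost."""
    monthly_contribution = monthly_revenue_per_customer * gross_margin
    if monthly_contribution <= 0:
        raise ValueError("monthly contribution must be positive")
    return cac / monthly_contribution


if __name__ == "__main__":
    # Made-up numbers: 1,000 treated customers vs. a 100-customer holdout.
    print(marginal_revenue_lift(120_000, 1_000, 10_000, 100))  # 20000.0
    # Made-up numbers: $600 CAC, $100 monthly revenue, 50% gross margin.
    print(cac_payback_months(600, 100, 0.5))  # 12.0
```

Comparing per-customer revenue against a holdout is what separates lift from revenue that would have arrived anyway.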

The message from McKinsey, and from the ugly pile of abandoned pilots behind it, is straightforward: GenAI doesn't fail because businesses lack imagination. It fails because they confuse experimentation with execution. The winners treat AI as a profit center, wire it into the bloodstream of the business, and insist on commercial proof early. That can happen in a global bank, a manufacturer, or any other company. Same rule everywhere. Find the bottleneck. Attach a number to it. Build the workflow. Then let the model earn its seat.